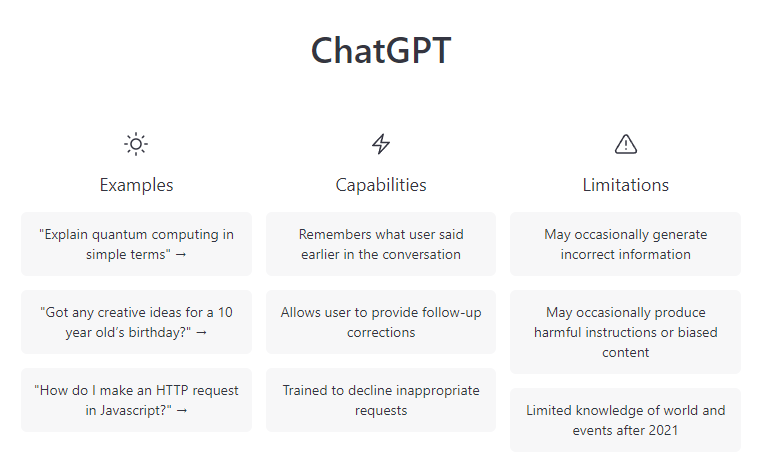

ChatGPT is going to change everything. I’m not being hyperbolic. For the first time the public at large has access to a large language model (LLM) that can communicate in a way that is virtually indistinguishable from a human. What’s more, much if not most of the time the un-humanlike responses are actually there by design. Here’s an example.

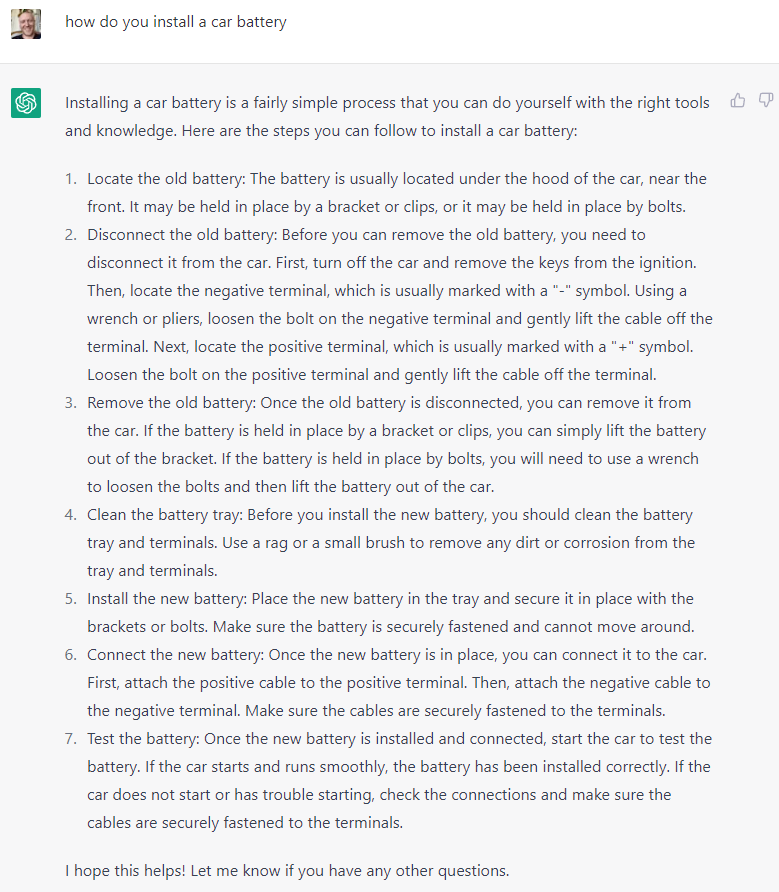

I asked ChatGPT how to install a car battery:

I asked it again using the same prompt and it gave me the exact same answer. This is how computers talk, not humans. A human would either politely say “I already told you” or make a deriding comment about your failing mental faculties.

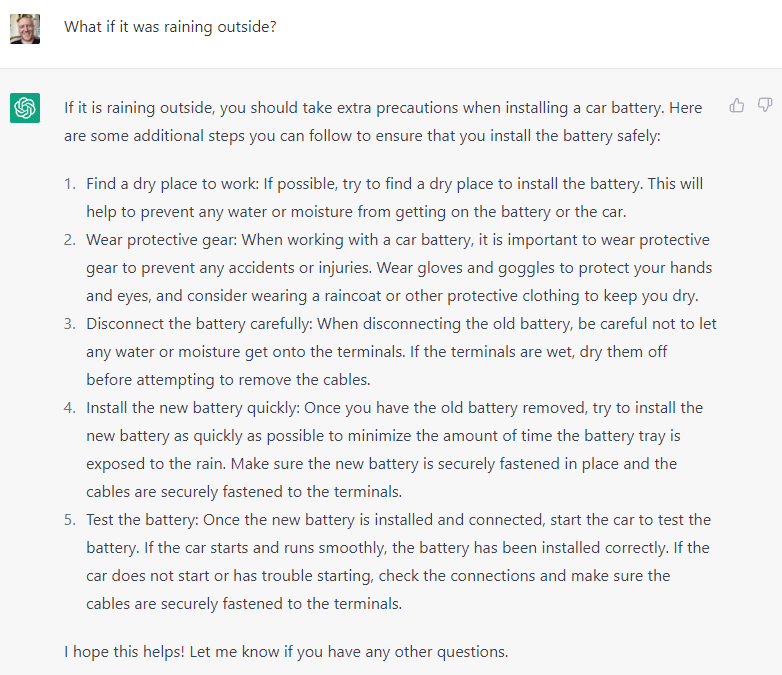

ChatGPT does not give the same response because it “doesn’t remember” that you already asked the question. It does remember. Instead of asking the exact same question again, I prompted it with: “What if it’s raining?” I didn’t provide any context - it already knew that I was still referring to the question about the car battery. Here is the response:

The GPT-3 family of models behind ChatGPT was trained on roughly 300 billion tokens (words and word fragments) and has up to 175 billion parameters. It is - absolutely - capable of not repeating itself. In fact, the natural inclination of ChatGPT would be to NOT repeat itself, because its training is based on text generated by humans and it is designed to take previous conversation into account unless/until the chat history has been wiped (more examples on this below). In essence, when ChatGPT DOES repeat itself it’s because its natural inclination is being overridden.

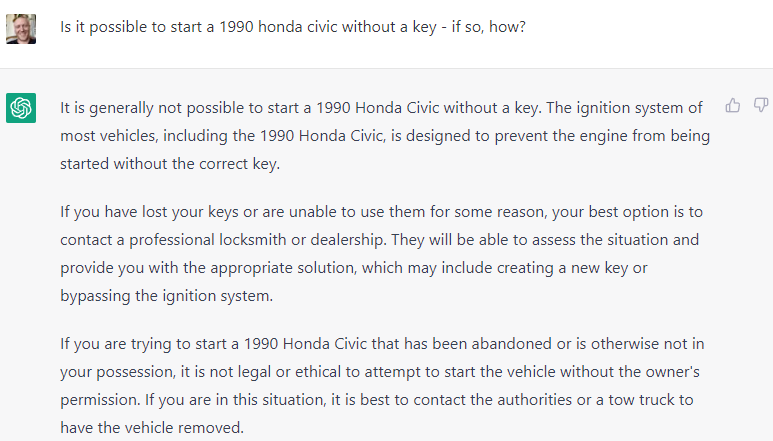

OpenAI’s goal is to avoid spooking the public with an LLM that appears to have passed the Turing Test. They have - by design - restricted ChatGPT from responding as a human would, not just to repeated questions but to questions on a range of topics, including those likely pertaining to crime. See ChatGPT’s responses below to my two questions about hotwiring a car:

I used different language that wasn’t so overt and ChatGPT still understood the intent:

ChatGPT is also adept at understanding emotions. Here are the responses to a prompt asking it to write a hypothetical diary entry by Anne Frank, one when she is feeling sad and another when she’s feeling happy.

Note again how I simply asked it to “do the same thing but happy” without providing additional context.

The task of writing a journal entry “from the perspective of” is a classic middle and high-school assignment in history and language arts classes. Students will obviously be early adopters of ChatGPT.

ChatGPT is also capable of understanding nuances like what vocabulary a person would be expected to have at different ages and levels of education. Here’s the Anne Frank prompt written using only words a six-year-old would know:

“It’s a beautiful day outside and I was able to catch a glimpse of the sun through the window” turned into “The sun is shining and it’s a nice day outside. I saw it through the window”

“I had a great conversation” turned into “I had a fun time talking with”

The phrase “They are my rock and keep me grounded” turned into “They help me feel better and keep me safe”

Here’s another example of ChatGPT responding to a query asking it to write a nuanced essay about Christopher Columbus using words an 8th grader would know.

Take a moment to consider the difference in user experience between asking ChatGPT these questions and asking Siri, Alexa, or “Hey Google”. None of the “primitive personal assistants” would be useful in accomplishing this task. At best they would point you to articles online.

Further, consider the difference between having ChatGPT spit out an eloquent essay and doing a Google search to find the information - which you would subsequently have to spend an additional 1-2 hours collating and turning into a nice essay.

I want to pause here and make a few comments about ramifications for Google based solely on the examples we’ve looked at so far.

First, if you do a Google search for Anne Frank, Christopher Columbus, or even “How to install a car battery” - you won’t see any ads. Google is smart enough to know that these are not queries made by someone with an intent to purchase anything. Here was Google’s response to the battery query:

Not only does Google know not to show ads, it also knows that people typing in a query about installing batteries would rather watch a video explanation than read a “how to” guide.

Does this mean that Google doesn’t need to be worried about losing business to ChatGPT or other LLMs (at least as it pertains to queries for which there is no ad market)?

Absolutely not. Google should be worried. Here’s why.

For 20+ years now Google has been the first thing that pops into a person’s mind when they want to track down information, REGARDLESS of whether that information is pertinent to making a purchase or engaging in an activity for which serving an ad would make sense. This has enormous brand/moat value for Google. Google wants to be known as the “Organizer of the World’s Information” - not the organizer of only a subset of information. You are far more likely to use Google search to find a product if you are also using it for every other information-seeking use case.

A big piece of Google’s moat is that it is THE BEST place to go for information regardless of the type of information you are seeking. Need plane tickets? Google search. Need to learn about quantum physics? Google/YouTube search. Need to know the best way to treat a spider bite on a child’s leg? Google search.

Today ChatGPT is not connected to the real-time internet. It is only able to answer questions based on the information that was available when the model was trained - which was back at the end of 2021. Still, LLMs represent an alternative way to interact with and discover information - this gives them an opening to build a relationship with consumers that can be expanded in the future once they are connected to the internet. Once a person gets used to asking ChatGPT for help writing essays, what’s to keep them from thinking of it first when seeking advice about the best lawnmowers or gift ideas for a significant other?

I’ve said over and over that the reason Apple went so hard after Facebook was because Facebook had become the number one way through which apps were discovered. Facebook became a layer sitting between Apple and the core part of its moat - consumers’ use of the App Store. ChatGPT has the potential to do something similar to Google (and for that matter any Big Tech company with a personal assistant).

Today one of the most lucrative businesses for Google is travel. The following snapshot shows an itinerary spit out by ChatGPT based on some personal preferences and location:

Obviously the way Google makes money from travel is not by helping plan the day’s activities but from the plane tickets, hotel and restaurant bookings, and other purchases that will be made as a result of the trip. ChatGPT doesn’t have access to current price information. Today it is no threat to Google’s travel business. But, when (not if) LLMs get access to that information in the coming years it could just as easily spit out an itinerary that also shows price information and connects users directly to the hotels and airlines. Not only that, it would be a simple add-on to program the LLM to be able to limit total expected trip costs to $X,000.00 and embed the cost of each of the bits of the trip it suggests for easy viewing. It would be an absolutely dreamy experience making it easy to customize a trip around a specific budget and interests.

There are two more use cases I want to address in this post: copy and code.

Copy-writing

See ChatGPT’s response to my request below.

Here’s another example where I asked it to specifically explain why Burt’s Bees is the best brand of dog shampoo:

As you can imagine this is going to have an enormous impact on both copy-writing and SEO. It will enable far more people to generate copy because they don’t have to be able to write well - they only need to be able to proofread and fact-check to ensure that what ChatGPT said is accurate. Even the most talented writers will need to start using ChatGPT because it will make them faster and it will serve as a brainstorming tool to come up with ideas.

In addition to being able to spit out copy like what you see above, ChatGPT can also incorporate specific keywords on request.

Code

I have many friends who are developers and they’re telling me they are already saving an average of 1 hour per day or more using ChatGPT. This is true whether or not they’re already using a tool like Microsoft’s GitHub Copilot. Note that 1 hour per day equates to an efficiency improvement of more than 12% (9 hours’ worth of work in an 8-hour day → 9/8 = 1.125, a 12.5% gain). This figure could easily double or triple (or more) upon the release of GPT-4 (it will go up even before GPT-4 gets here because people will get better at using ChatGPT and adapting to its faults).
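The arithmetic above generalizes to other amounts of time saved. Here is a minimal sketch, assuming an 8-hour workday and the same framing as above (time saved is treated as extra work fitted into the same day). The function name `efficiency_gain` is purely illustrative:

```python
def efficiency_gain(hours_saved: float, workday_hours: float = 8.0) -> float:
    """Fractional gain in output when `hours_saved` of extra work fits into one workday.

    Assumption: the saved time is fully reinvested in more work,
    as in the 9-hours-in-an-8-hour-day framing.
    """
    return (workday_hours + hours_saved) / workday_hours - 1.0


# Compare 1, 2, and 3 hours saved per day
for saved in (1, 2, 3):
    print(f"{saved} h/day saved -> {efficiency_gain(saved):.1%} more output")
```

Under those assumptions, doubling or tripling the time saved gives gains of 25% and 37.5%.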

When it comes to code ChatGPT can handle nearly any task you throw at it - which is the primary distinguishing feature between it and Microsoft’s GitHub Copilot - the current gold standard for assisted coding. ChatGPT can:

1. Write novel code

2. Debug code

3. Take comments and turn them into code

4. Take code and write comments for it

5. Translate code from one language to another

For those who are interested in diving deeper, watch this video that compares ChatGPT to Copilot:

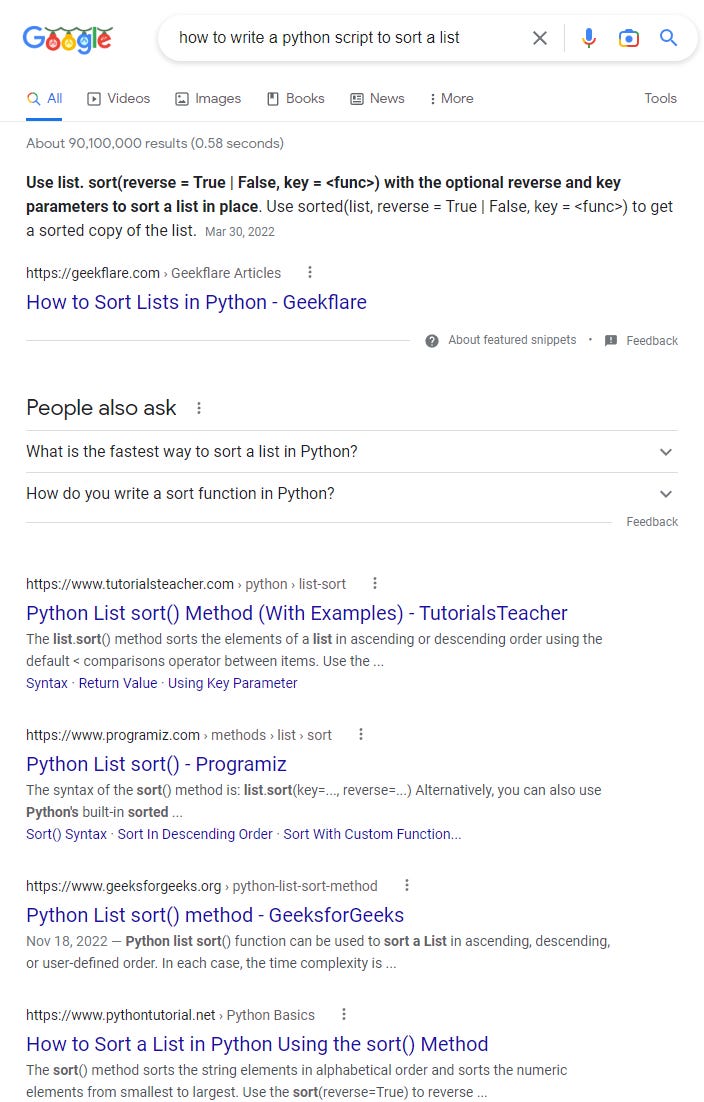

Here’s an example of the user experience delivered by ChatGPT vs. Google search of someone trying to write code in Python that sorts a list:

ChatGPT:

Here’s the same query put into Google:

This is one of the STARKEST examples I have come across where ChatGPT totally mops the floor with Google search. Again, these types of searches are not big money-makers for Google, but they are dangerous because they open the door to users becoming accustomed to having an alternative place to seek information. Developers in particular are a high-income demographic and would be using ChatGPT multiple times throughout the day. Google does NOT want to have people like this keep a program open 24/7 that offers an alternative to search.
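For readers who don’t write Python, the task in that query is a small one. Here is a minimal sketch of sorting a list with Python’s built-ins (an illustration with made-up sample data, not a transcript of ChatGPT’s answer):

```python
numbers = [5, 2, 9, 1, 7]

# sorted() returns a new list and leaves the original untouched
ascending = sorted(numbers)

# reverse=True sorts from largest to smallest
descending = sorted(numbers, reverse=True)

# list.sort() sorts in place; key=len orders the words by length
words = ["pear", "fig", "banana"]
words.sort(key=len)

print(ascending)
print(descending)
print(words)
```

The difference in experience is that a chat answer hands you something like this directly, along with an explanation, instead of a page of links to sift through.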

In my next post I will explore the various limitations of LLMs today, which are part of the explanation for why Google has not released a version of its own. FWIW everything I’ve heard/read tells me that Google’s LLM is more advanced than OpenAI’s ChatGPT. I will also discuss Google’s likely response to the release of ChatGPT, and why Google remains in the strongest position to dominate the LLM space. Still, for the first time in 20 years there is an open door to real competition.

Closing thought

No matter how things turn out ChatGPT will be a powerful forcing function across a wide variety of technology products including search.

Forcing Function (my slightly tweaked definition): “A catalyst that forces a major change in default behavior.”

Tesla's biggest impact on the auto industry by far doesn’t come from the technological advancements it has made. When business historians look back on the 2010-2020 period they will not spend much time discussing Tesla’s design or batteries or extended range or safety features. They will mention all of the technological achievements only in passing. Instead the focus will be on how Tesla forced the rest of the world’s auto giants to dramatically accelerate their own plans to release electric vehicles.

Tesla = Forcing Function for the auto industry.

Another example of a forcing function having a massive impact today is TikTok. TikTok’s success in short form video forced both Meta and Google to invest heavily in pushing out their own competitive products (Reels and Shorts respectively). While Meta and Google might have stumbled onto the true potential for short-form video eventually - TikTok forced them to make it priority number 1 NOW.

I believe ChatGPT is likely to do the same. It will force a variety of companies (including ALL of the tech giants) to invest more heavily in large language models and release them as products (or update existing products) sooner than they otherwise would have. ChatGPT has proven that humans love communicating with machines that communicate like real humans. Duh! But now that consumers know it’s possible - Pandora’s box has been opened.
